Remember when AI video was mostly about cool experiments? Well, hold onto your hats, because the game has changed dramatically! As we close out 2025, the AI video field has hit a critical milestone: the successful commercialization of true unified multimodal control.

This isn’t just about generating a video from text anymore. We’re now talking about a single, powerful AI brain that can create, edit, and modify entire scenes with unprecedented accuracy and consistency. It’s a leap that promises to redefine content creation for marketing, but also amplifies the urgent need for smart governance.

What’s New: The All-in-One Creative Powerhouse

The biggest update since late November is the official launch and real-world application of models like Kling Video O1 and significant advancements from players like Runway. These tools are now delivering what was once a distant dream:

-

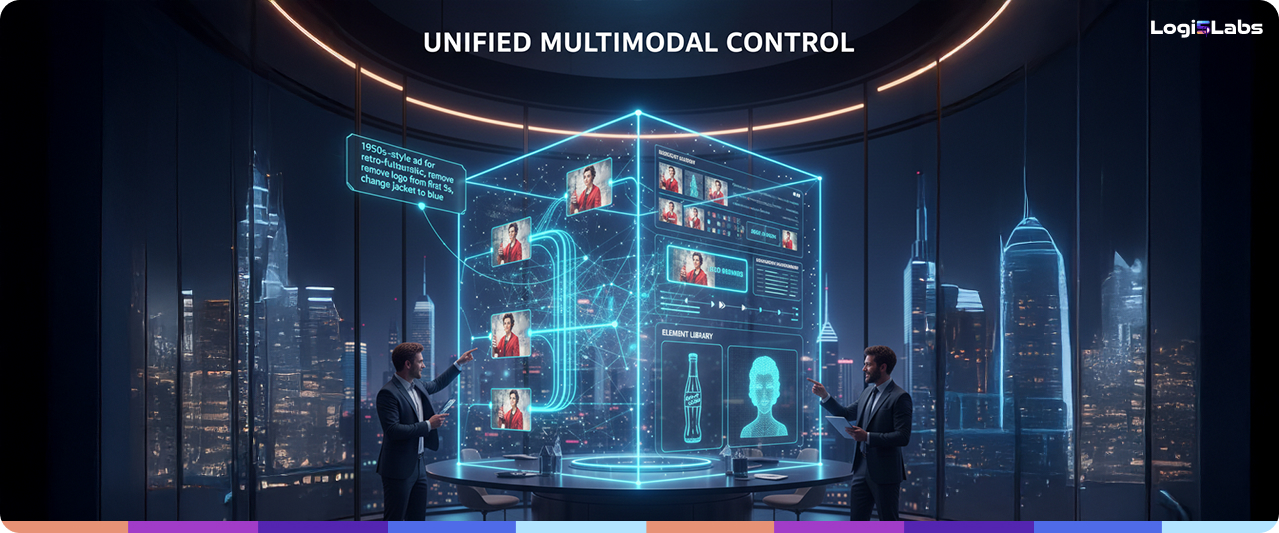

Single-Engine Workflow: Imagine eliminating the traditional video assembly line. Models like Kling Video O1 integrate generation, editing, inpainting (removing unwanted objects), restyling, and video extension into one cohesive system. This means a single AI “brain” handles the entire creative lifecycle, from concept to final cut.

- Conversational Editing is Here: Post-production is no longer a maze of complex software. Now you can simply tell the AI to “remove bystanders,” “change daytime to dusk,” or “replace the main character’s outfit” using plain-text commands. This unlocks fast, granular control over individual elements of a scene without needing specialized editing skills.

- Industrial-Grade Consistency: This is a game-changer for brands! Kling Video O1 now includes an “Element Library” where you can upload multiple reference images for characters, products, or props. The AI “locks in” this identity, maintaining consistent appearances across different shots, angles, and scenes. That sharply reduces “object drift” and characters changing appearance mid-video, which is crucial for professional marketing content.

- New Benchmarks for Realism: Alongside unified control, models like Runway’s Gen-4.5 are pushing the boundaries of pure realism. Launched in early December, Gen-4.5 immediately grabbed top spots in major benchmark tests, showing marked improvements in simulating real-world physics. Objects move with believable weight, liquids flow plausibly, and motion is smoother, making AI-generated content increasingly difficult to distinguish from live-action footage.

The Game-Changing Impact for Marketers

This new era of unified multimodal control means:

- Unprecedented Speed & Scale: Generate dozens of highly personalized video ads, product demos, or social media clips in minutes, not weeks. Test endless creative variations to find what truly resonates with your audience.

- Creative Freedom: Experiment with complex visual concepts and intricate edits that were previously too costly or time-consuming.

- Global Reach: Rapidly adapt content for different markets, changing languages, cultural elements, and even specific actors with ease.

The Critical Catch: A New Governance Imperative

While exciting, this leap forward also introduces a significant new challenge: The AI is now making complex creative and compliance decisions autonomously. When a single AI can generate and edit based on a vague prompt, the risk of brand inconsistency, misinformation, or compliance errors skyrockets.

This means vendor-agnostic validation is no longer just a good idea – it’s absolutely essential. Your brand needs an independent AI Safety Net that can monitor and validate the outputs from any of these powerful new platforms (Kling, Runway, Adobe, Google, etc.) to ensure every frame, every edit, and every word aligns perfectly with your brand guidelines and regulatory requirements.

The AI video revolution is here, and it’s powerful. But harnessing that power safely means putting robust, independent governance in the driver’s seat.